What Is an Agentic Lakehouse?

What Is an Agentic Lakehouse?

The Agentic Lakehouse is the massive architectural evolution of the standard Data Lakehouse, completely redesigned and heavily augmented to act as the native operational foundation for autonomous Artificial Intelligence Agents. While traditional Data Lakehouses were engineered specifically for human beings (rendering Business Intelligence dashboards or Excel extracts), human beings possess intrinsic, common-sense reasoning. An AI Agent does not. If an autonomous Agent is deployed to investigate a drop in corporate revenue and is pointed at a raw Amazon S3 bucket containing 10,000 chaotic, poorly named Apache Parquet files, the Agent will hallucinate catastrophically, joining the wrong tables and reporting totally fictional metrics.

The Agentic Lakehouse completely solves this catastrophic failure by wrapping the massive, scalable storage of the open data lake in a highly strict, machine-readable Semantic Layer and a highly secure, mathematically enforced Governance Engine. You do not simply give an Agent a database password; you give the Agent a fully structured, curated, and context-rich environment where mathematically correct reasoning is physically guaranteed by the architecture.

The Architecture of the Agentic Lakehouse

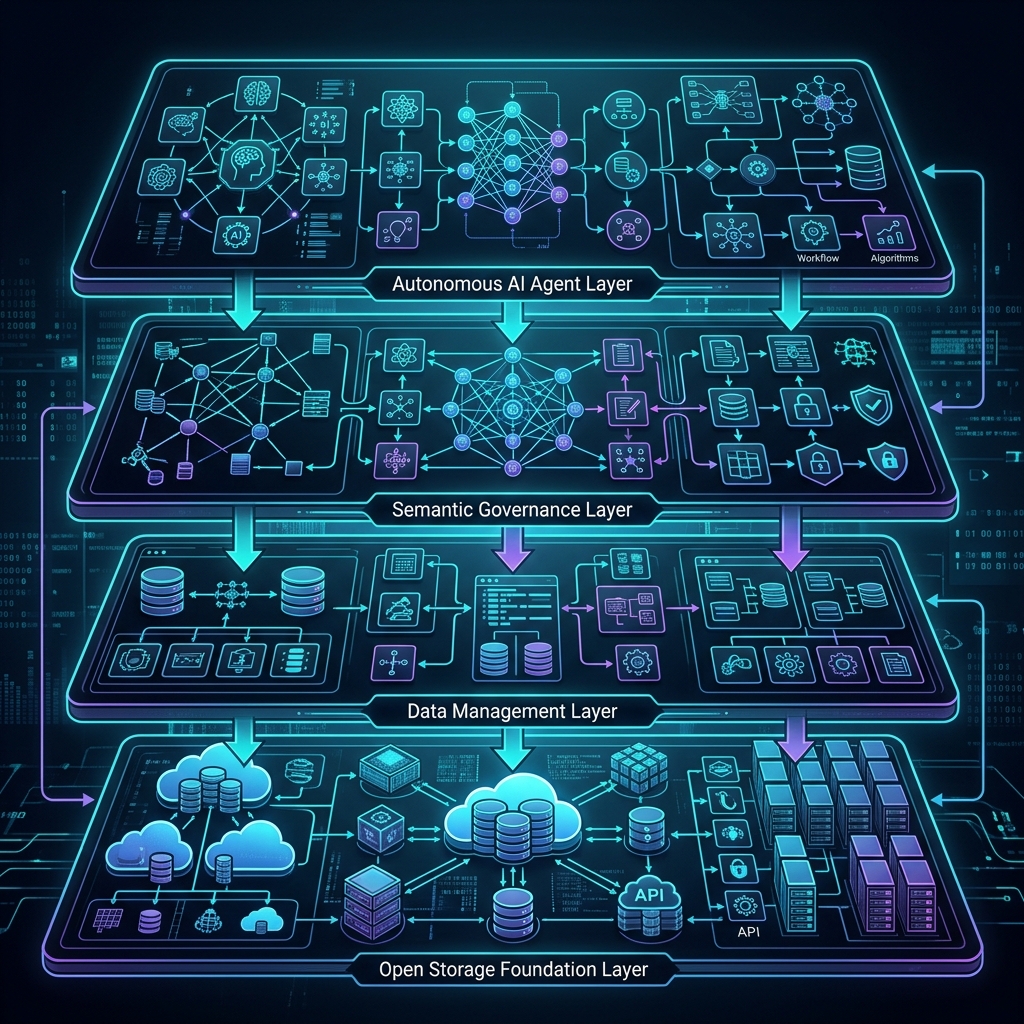

To transition a standard Lakehouse into an Agentic Lakehouse, architects must implement four highly specific architectural layers explicitly designed for machine consumption.

1. The Contextual Semantic Layer

The absolute core of the Agentic Lakehouse. Data engineers explicitly define complex business logic (e.g., the exact mathematical formula for Gross_Margin or Churn_Rate) directly inside the engine’s Semantic Layer.

When the AI Agent connects to the Lakehouse, it does not see raw files. It sees pristine, logically verified virtual datasets. The Semantic Layer provides the Agent with an explicit Data Dictionary and API definitions, preventing the Agent from having to guess the relationships between primary and foreign keys. This logically isolates the AI from the chaos of the raw data.

2. Governed Execution and Trust

Deploying autonomous AI with write-access to corporate data is an existential security threat. If an Agent enters an infinite loop, it could autonomously execute a trillion queries or drop a production table. The Agentic Lakehouse enforces absolute isolation. It utilizes highly aggressive, attribute-based access control (ABAC). The Agent is strictly bound to a limited Service Account. If the Agent attempts an unauthorized action (like dropping a production table or reading restricted PII data), the Lakehouse engine intercepts the request and instantly terminates the query. It mathematically guarantees that an AI hallucination cannot destroy the enterprise. Furthermore, every single query the AI executes is cryptographically logged in an immutable Audit Log for forensic review.

3. Integrated Vector and Structured Search

A true Agentic Lakehouse does not force the AI to utilize two completely different databases (a traditional SQL database for numbers and a separate isolated Vector Database for text). The Agentic Lakehouse natively supports multimodal operations. The AI Agent can write a single, complex SQL query that mathematically joins structured financial data (Apache Iceberg) with unstructured semantic vectors, executing highly complex RAG (Retrieval-Augmented Generation) entirely within the massive, unified Lakehouse architecture. This eliminates the catastrophic pipeline fragility of synchronizing a lakehouse with an external vector store.

4. Open API Tooling (Model Context Protocol)

AI Agents cannot type on a keyboard. They communicate via explicit JSON function calls. The Agentic Lakehouse provides native, highly robust API endpoints (such as those defined by the open-source Model Context Protocol) that allow the AI to safely execute queries, read schema definitions, and poll asynchronous analytical jobs with absolute, deterministic reliability. The API serves as a strict mathematical contract; if the AI formats a request incorrectly, the API returns a structured error, triggering the AI’s autonomous self-correction loop.

The Agentic Workflow Example

To understand the value of this architecture, consider a standard corporate workflow:

- The Request: An executive asks the corporate Agent, “Why did our supply chain costs spike in Europe last quarter?”

- The Translation: The Agent connects to the Semantic Layer via the Model Context Protocol. It reads the definition for

supply_chain_costsand realizes it needs to query thelogisticsandfuel_surchargesvirtual datasets. - The Execution: The Agent autonomously writes the SQL. The Lakehouse Governance Engine verifies the Agent has permission to read the European region and dynamically masks any driver PII data.

- The Synthesis: The Agent receives the raw numerical output, cross-references it with internal logistics vector embeddings (RAG) to find recent union strike reports, and generates a cohesive, mathematically verified natural language response for the executive.

Summary of Technical Value

The Agentic Lakehouse represents the final synthesis of Data Engineering and Artificial Intelligence. By explicitly wrapping the massive scale and cheap storage of the Open Data Lakehouse in a highly strict, mathematically sound Semantic Layer, organizations can safely deploy highly autonomous, self-reasoning AI Agents capable of executing flawless, petabyte-scale analytical investigations without the risk of hallucination, data chaos, or catastrophic security breaches.

Learn More

To learn more about the Data Lakehouse, read the book “Lakehouse for Everyone” by Alex Merced. You can find this and other books by Alex Merced at books.alexmerced.com.